Domain decomposition problem

- James Starlight

Topic Author

- Visitor

8 years 1 month ago #5445

by James Starlight

Domain decomposition problem was created by James Starlight

Hello,

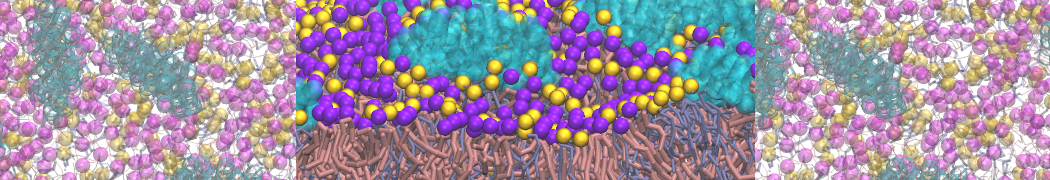

I have run into a problem running a CG simulation on a cluster. Specifically, I built a Martini parametrization of a GPCR embedded in a bilayer and successfully tested this system on my local machine with GMX 4.5, following the tutorial.

I then tried to submit the GROMACS job on 64 nodes of my local cluster, using GMX 4.5 with mpiexec, and got this error:

Program g_mdrun_openmpi, VERSION 4.5.7

Source code file:

/builddir/build/BUILD/gromacs-4.5.7/src/mdlib/domdec.c, line: 6436

Fatal error:

There is no domain decomposition for 64 nodes that is compatible with

the given box and a minimum cell size of 2.6255 nm

Change the number of nodes or mdrun option -rcon or your LINCS settings

Next, I tried to increase the size of the system by using a bilayer twice as big. Unfortunately, that simulation also did not start:

Program g_mdrun_openmpi, VERSION 4.5.7

Source code file:

/builddir/build/BUILD/gromacs-4.5.7/src/mdlib/domdec_con.c, line: 693

Fatal error:

DD cell 0 2 1 could only obtain 0 of the 1 atoms that are connected

via vsites from the neighbouring cells. This probably means your vsite

lengths are too long compared to the domain decomposition cell size.

Decrease the number of domain decomposition grid cells.

For more information and tips for troubleshooting, please check the GROMACS

website at www.gromacs.org/Documentation/Errors

Is it possible to modify some mdp options for these runs, or would it be better to switch to GMX 5.0 instead?

Thanks for help!

J.

Thanks!


- mnmelo

- Admin

8 years 1 month ago #5451

by mnmelo

Replied by mnmelo on topic Domain decomposition problem

Hi!

One mdrun flag you probably need to set is

-rdd 1.4

This will tell mdrun to communicate some more particles than the default across neighboring DD cells.

Let us know if this helps.

Manel
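
As a rough illustration of the first error in the question, the sketch below enumerates the rectangular grids that split a box into a given number of cells and keeps those whose cells are at least the minimum size along every dimension. It is a simplified model and not what mdrun actually does internally: it ignores separate PME ranks, triclinic boxes and load-balancing margins. The function name `dd_grids` and the box dimensions are hypothetical, since the thread does not state the real box size; only the 2.6255 nm minimum cell size comes from the error message.

```python
def dd_grids(n_ranks, box, min_cell):
    """Return every (nx, ny, nz) with nx*ny*nz == n_ranks whose cells are
    at least min_cell wide along x, y and z (simplified model only)."""
    grids = []
    for nx in range(1, n_ranks + 1):
        if n_ranks % nx:
            continue
        rest = n_ranks // nx
        for ny in range(1, rest + 1):
            if rest % ny:
                continue
            nz = rest // ny
            cell = (box[0] / nx, box[1] / ny, box[2] / nz)
            if min(cell) >= min_cell:
                grids.append((nx, ny, nz))
    return grids


# Hypothetical rectangular box in nm (assumption, not from the thread).
box = (12.0, 12.0, 10.0)
# Minimum cell size reported in the first error message.
min_cell = 2.6255

for n in (64, 32, 16):
    print(n, dd_grids(n, box, min_cell))
```

With this assumed box, no grid exists for 64 cells while 32 and 16 cells do fit, which matches the advice in the error message to change the number of nodes. A larger minimum cell size (for example from long constraints or vsite constructions) shrinks the list further.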
